Before you start: what do you know about SEO split-testing? If you’re unfamiliar with the principles of statistical SEO split-testing and how SplitSignal works, we suggest you start here or request a demo of SplitSignal.

First, we asked our Twitter followers to vote:

Only 33% of our followers guessed it right! The result was negative.

Read the full case study to find out why.

The Case Study

Business intelligence, sales prospecting and competitive insights are all key to growing a business. Since Semrush shares the mission of helping businesses grow, SplitSignal partnered with one of the largest B2B providers of company and employee contact information. In this experiment, we tested title tags with two key changes.

The Hypothesis

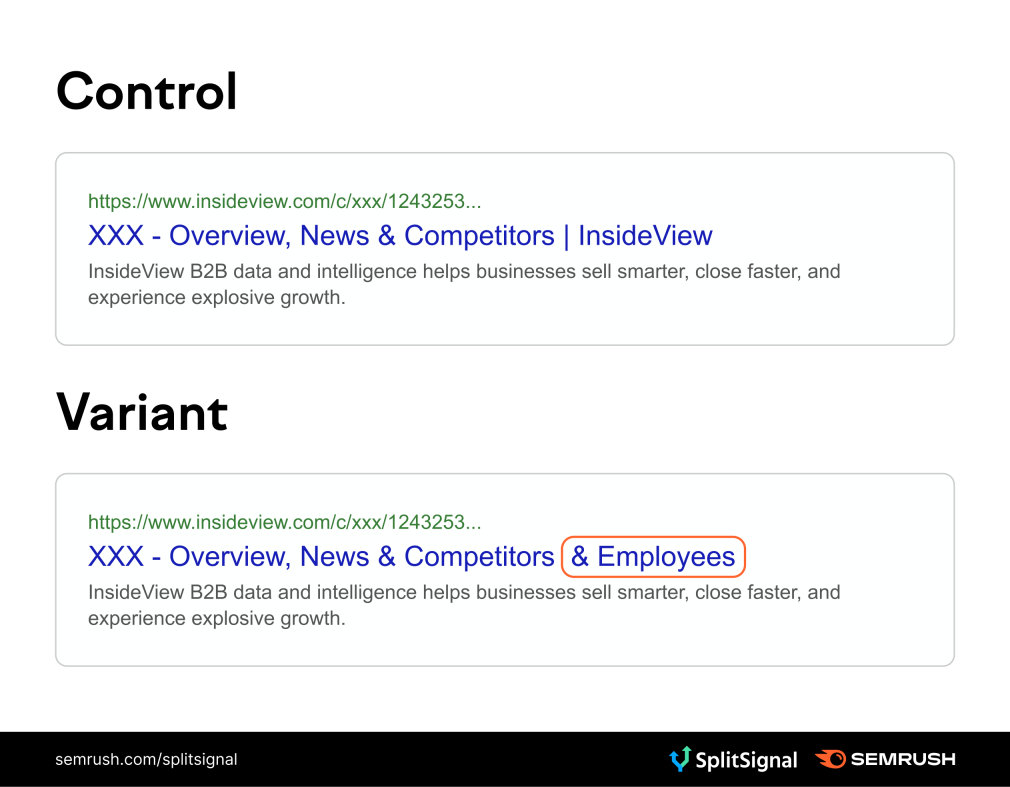

Our hypothesis was that since many users are looking for employee information, adding terminology that connects to that user intent would lead to a higher CTR and thus more traffic. As part of this experiment, we also removed the brand name from the title tag to make room for the word “Employees.”

The Test

We used SplitSignal to identify 4,919 pages for the test. We used 2,471 of those pages as the control group and applied the change to the remaining 2,448 variant pages. To make sure changes are seen in SERPs, SplitSignal also measures whether Googlebot has visited the variant pages since launch. In this case, Googlebot visited 97% of the variant URLs.
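To make the control/variant split concrete, here is a minimal sketch that divides a list of URLs into two groups of the sizes used in this test. It assumes a plain seeded random shuffle, which is not necessarily how SplitSignal selects its groups; the `split_pages` function and the `example.com` URLs are hypothetical and only illustrate the idea.

```python
import random


def split_pages(urls, variant_size, seed=0):
    """Randomly split URLs into (control, variant) lists.

    Assumption: a simple seeded shuffle. The grouping method
    SplitSignal actually uses is not described in this case study.
    """
    rng = random.Random(seed)      # seeded so the split is reproducible
    shuffled = list(urls)          # copy, so the caller's list is untouched
    rng.shuffle(shuffled)
    variant = shuffled[:variant_size]
    control = shuffled[variant_size:]
    return control, variant


# Hypothetical URLs standing in for the 4,919 tested pages.
urls = [f"https://example.com/company/{i}" for i in range(4919)]
control, variant = split_pages(urls, variant_size=2448)
print(len(control), len(variant))  # 2471 2448
```

Every page lands in exactly one group, so the two group sizes always add up to the number of pages tested.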

The variant title tags added the word “Employees,” since our hypothesis was that employee information is part of the reason people search for these pages. We also removed the brand name to make room for the added word.

The Result

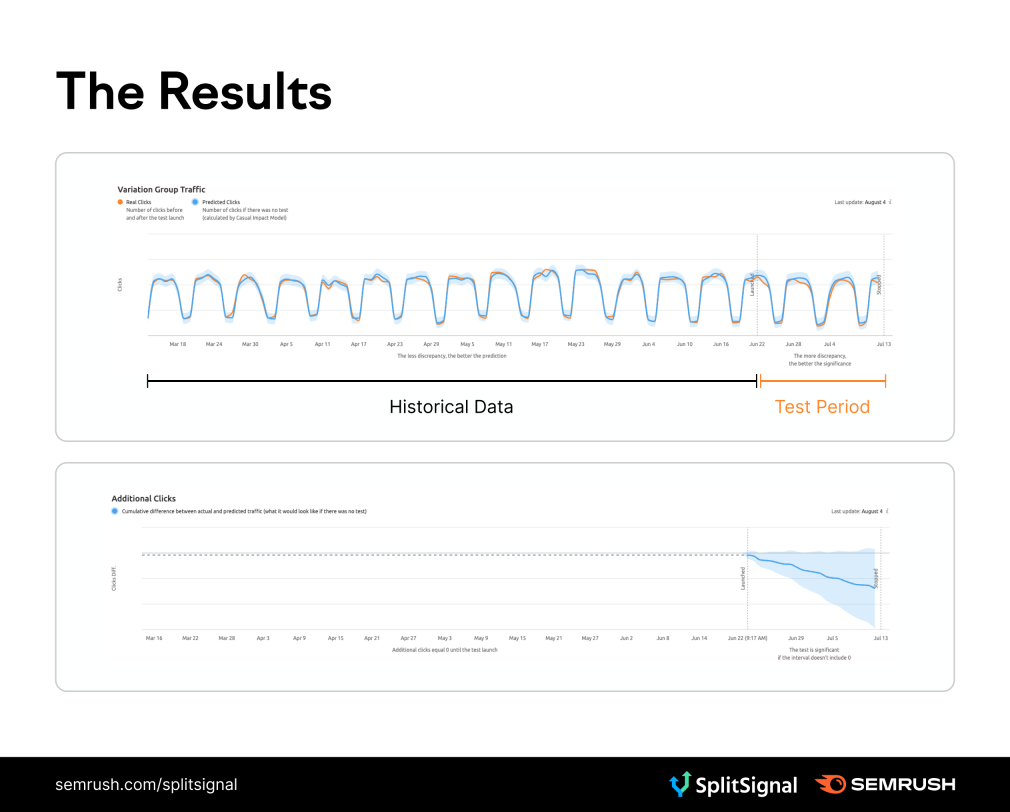

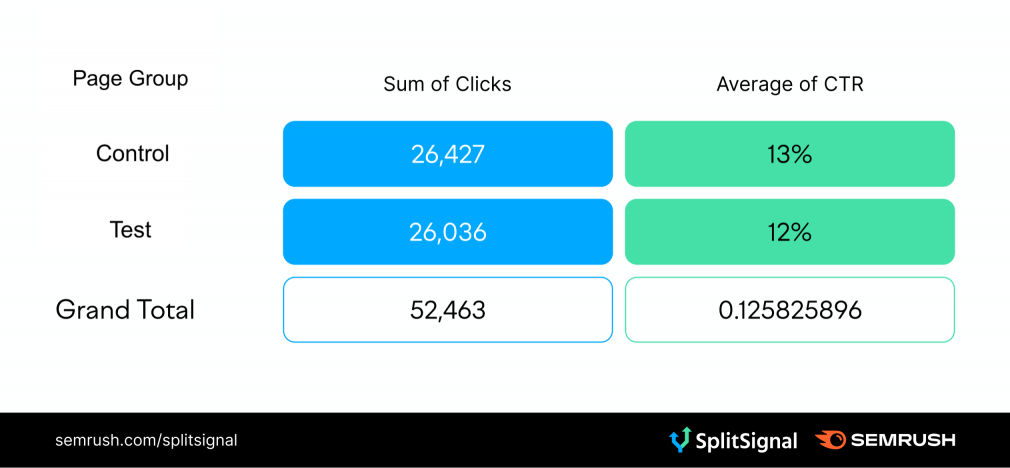

The test ran for 21 days, from June 22nd through July 13th. The title tag change had a negative outcome: the variant group received 3,695 fewer clicks (-6.2%) than the control group. The result has a 96% confidence level, which indicates that the drop is very likely a real effect of the change.
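As a rough sanity check on these figures, the sketch below works backwards from the two reported numbers to the click volume they imply. It assumes the -6.2% is measured against the clicks the variant group would have been expected to receive without the change; the case study does not state the baseline explicitly, and because 6.2% is a rounded value the result is only approximate. The function name `implied_baseline` is hypothetical.

```python
def implied_baseline(click_delta, relative_change):
    """Return the baseline click count implied by an absolute and a relative change.

    Assumption: relative_change = click_delta / baseline.
    """
    return click_delta / relative_change


baseline = implied_baseline(click_delta=-3695, relative_change=-0.062)
observed = baseline - 3695  # clicks the variant group actually received

print(f"implied baseline clicks: about {baseline:,.0f}")
print(f"implied observed clicks: about {observed:,.0f}")
```

Under that assumption, the variant pages would have been expected to receive on the order of 60,000 clicks over the 21 days.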